ChatGPT’s success could have come sooner, says former Google AI researcher

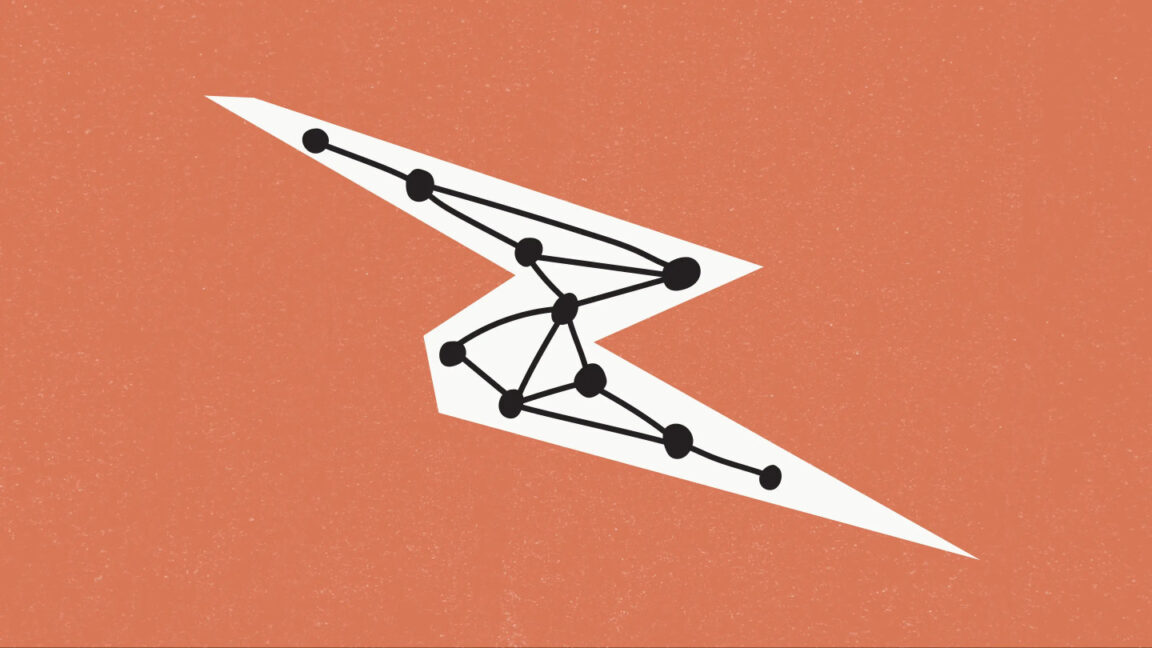

In 2017, eight machine-learning researchers at Google released a groundbreaking research paper called "Attention Is All You Need," which introduced the Transformer AI architecture that underpins almost all of today's high-profile generative AI models.

The Transformer is a key component of the modern AI boom: it uses a neural network to translate (or transform, if you will) chunks of input data called "tokens" into another desired form of output. Variations of the Transformer architecture power language models like GPT-4o (and ChatGPT), audio synthesis models like those behind Google's NotebookLM and OpenAI's Advanced Voice Mode, video synthesis models like Sora, and image synthesis models like Midjourney.

At TED AI 2024 in October, one of those eight researchers, Jakob Uszkoreit, spoke with Ars Technica about the development of transformers, Google's early work on large language models, and his new venture in biological computing.

© Jakob Uszkoreit / Getty Images